Key Takeaways:

- AI-generated war imagery is no longer fringe content but a scalable system producing realistic but entirely fabricated visual events.

- These synthetic images and videos are designed to mimic authentic conflict reporting and enter the same distribution pathways as genuine journalism.

- Once circulated, fabricated visuals can shape perception before verification occurs, reinforcing narratives that may later be difficult to correct.

For decades, the credibility of war reporting has rested on a simple assumption: that the camera captures something that actually happened.

That assumption is no longer reliable.

A growing volume of AI-generated war imagery is now being produced, distributed, and consumed at scale. These images do not document events. They simulate them. Yet they are often presented in formats that closely resemble authentic news coverage, making them difficult to distinguish from real reporting in fast-moving information environments.

This represents a structural shift. The issue is no longer limited to how real images are selected or framed. Entire visual events can now be constructed before they are ever reported.

From Documentation to Fabrication

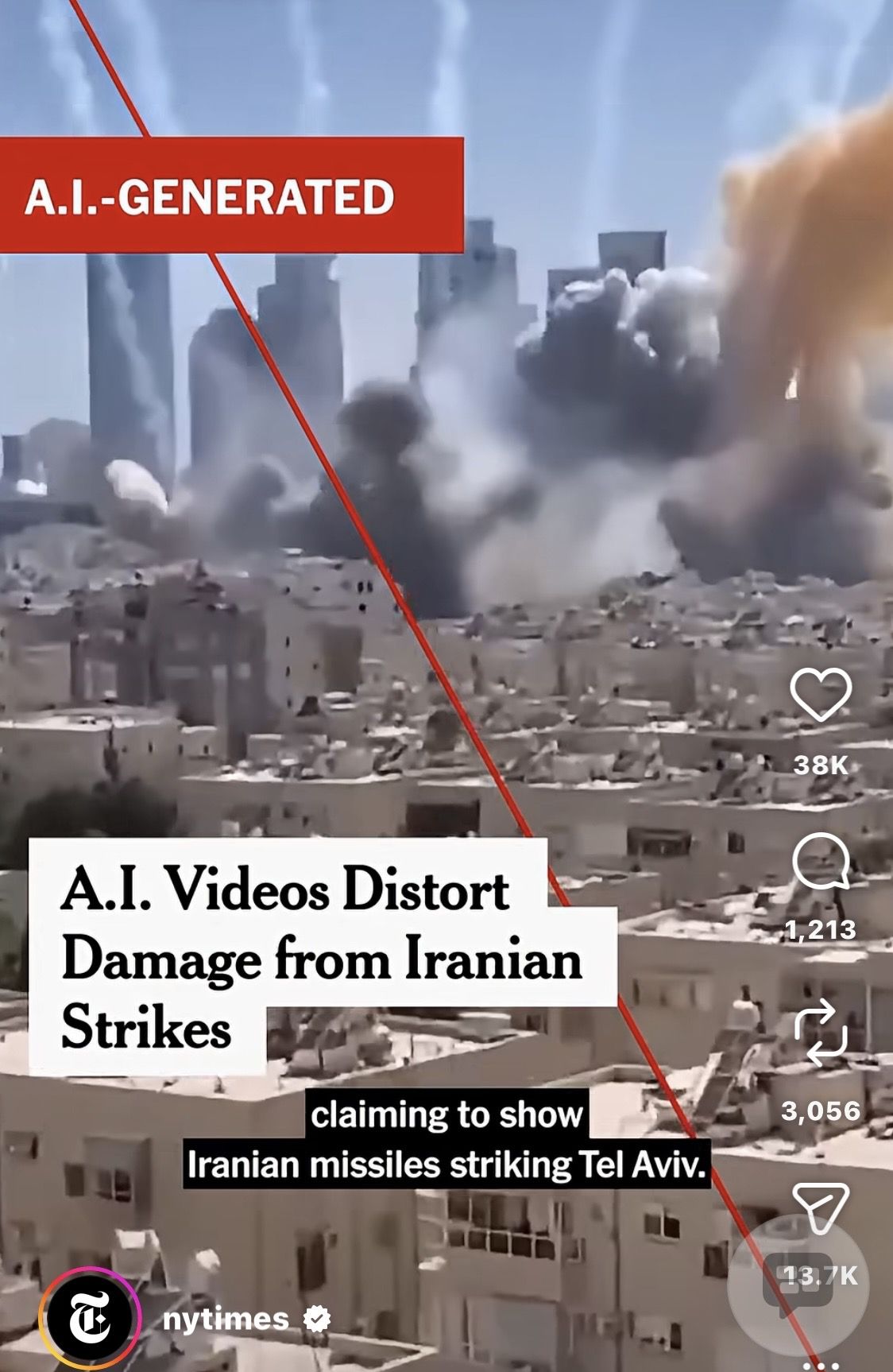

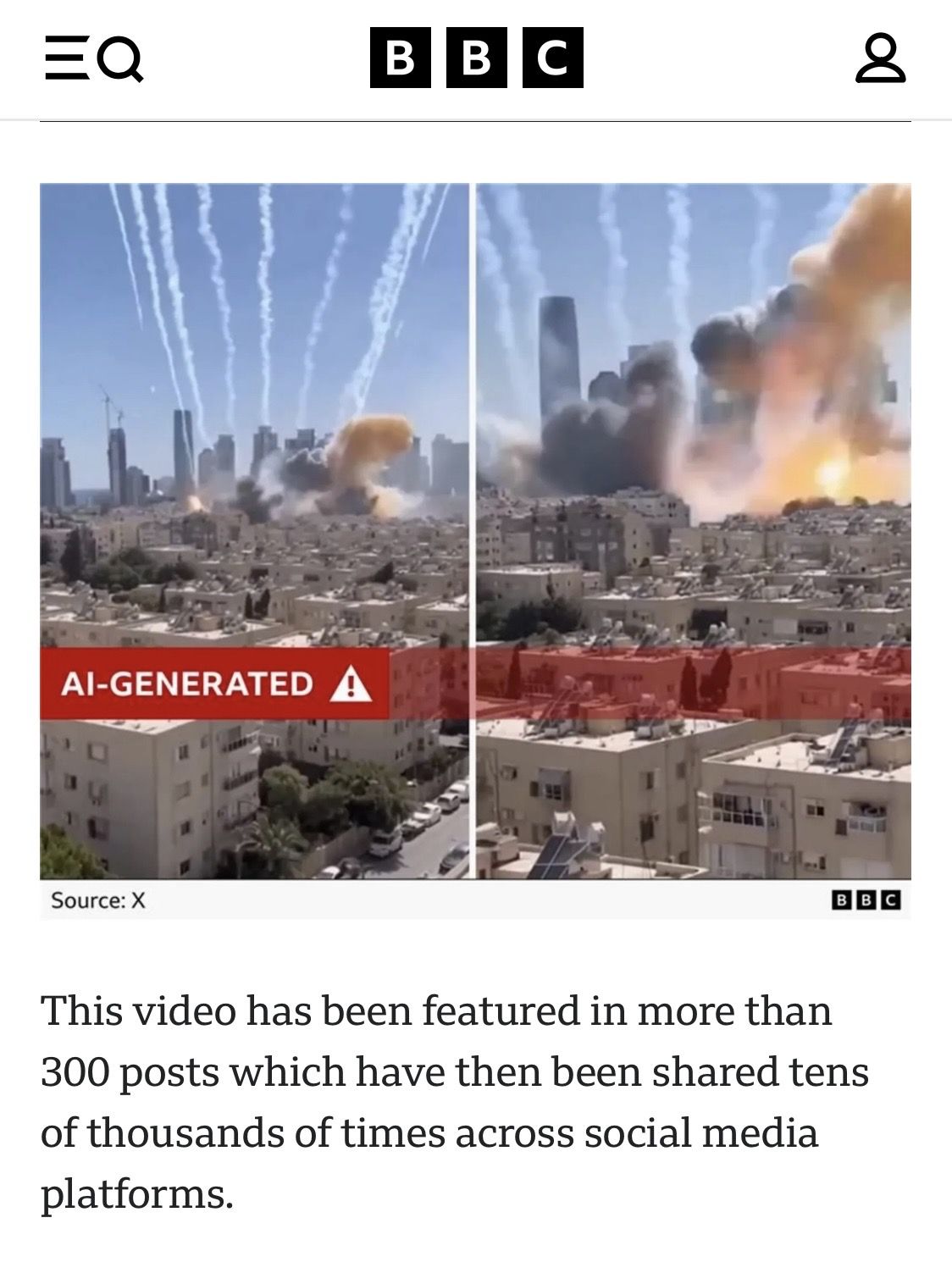

Recent analysis by the Foundation for Defense of Democracies highlights the scale of this shift.

According to that analysis, more than one hundred pro-Iran deepfake videos have circulated in recent weeks alone. These clips depict missile strikes, destroyed infrastructure, and battlefield outcomes that did not occur.

The imagery is designed to replicate the visual language of real conflict reporting. Explosions, aerial perspectives, urban destruction, and emergency scenes are rendered with increasing realism.

To the untrained viewer, these visuals appear credible. They look like the same kinds of images routinely broadcast by global media outlets.

The difference is not in how they look.

The difference is that they never happened.

The Mechanism of Synthetic War Imagery

The production of these visuals follows a clear and repeatable structure.

- Step 1: Create the event

AI systems generate highly realistic scenes of conflict, including strikes, casualties, and destruction. These scenes are constructed to match familiar visual patterns seen in real war coverage.

- Step 2: Scale the output

Large volumes of content are produced quickly. Variations of the same theme are generated to create the impression of widespread and consistent events.

- Step 3: Embed narrative cues

The imagery is aligned with specific messages. These may include claims of military success, civilian harm, or strategic dominance. The visuals are built to reinforce those claims before any factual verification takes place.

- Step 4: Distribute across networks

The content is circulated through aligned digital ecosystems, including state-linked media environments and amplification networks. Algorithms accelerate visibility and engagement.

By the time the material reaches a wider audience, it already carries the appearance of legitimacy.

Why Synthetic Imagery Is Effective

The power of this content lies in its familiarity.

It does not look like propaganda in the traditional sense. It looks like news.

Viewers are conditioned to trust visual evidence, particularly in conflict reporting, where images often carry more weight than text. When synthetic visuals adopt the same composition, pacing, and aesthetic as authentic footage, they can bypass initial skepticism.

The speed of distribution compounds the effect. Images are seen, shared, and internalized before verification processes can catch up.

At that point, the visual impression has already taken hold.

Amplification and Ecosystem Support

The spread of synthetic war imagery is not random.

According to the same analysis, much of this content is produced within Iranian-aligned networks and then amplified through broader digital ecosystems, including Russian and Chinese channels.

This layered amplification ensures that the material reaches global audiences quickly and repeatedly.

The result is not just visibility, but reinforcement.

Repeated exposure creates familiarity. Familiarity creates perceived credibility.

From Fabrication to Perception

Once these images enter the public domain, they begin to function in the same way as authentic visuals.

They are:

- Shared across social platforms

- Referenced in commentary and analysis

- Embedded into ongoing narratives

- Used to support claims about real-world events

At this stage, the distinction between real and synthetic becomes increasingly difficult for audiences to maintain.

The image has already done its work.

A Continuum, Not a Separate Problem

This development does not replace the issues identified in earlier analyses of visual media. It extends them.

Previous articles in this series have shown how:

- Cropping can remove context

- Timing can distort meaning

- Selection can reshape perception

Synthetic imagery operates within the same framework.

The difference is that the starting point is no longer a real event. It is a constructed one.

Yet once introduced into the information environment, it behaves in exactly the same way as authentic material that has been selectively framed.

Conclusion

The emergence of AI-generated war imagery completes a chain that has been developing across modern media systems. A visual narrative no longer needs to begin with a real event. It can begin with fabrication, pass through amplification networks, and arrive in front of audiences carrying the same authority as authentic reporting.

From there, the process becomes familiar. Images are selected, framed, and integrated into broader coverage. Headlines align with visuals. Visuals reinforce interpretation. Perception is shaped.

This creates a continuous pipeline in which fabrication, selection, and publication are no longer separate stages but interconnected parts of the same system. The responsibility for what audiences ultimately see is therefore shared across that entire chain, from creation to distribution to editorial use.

Understanding that chain is now essential, because in the current environment the question is no longer whether an image has been edited or misframed.

It is whether the event that is shown ever happened at all.
