Key Takeaways:

- AI-generated imagery is no longer confined to fringe platforms. It is entering mainstream journalism and shaping public discourse before verification occurs.

- A synthetic image falsely linking Jeffrey Epstein and Israeli President Isaac Herzog was amplified before basic forensic checks were applied.

- Visual manipulation is not new. What is new is scale, speed, and the erosion of editorial gatekeeping in a media ecosystem already driven by emotional imagery.

Introduction: When Synthetic Imagery Crosses the Threshold

Earlier this month, two separate fabricated images involving Jeffrey Epstein began circulating online.

The first was a clearly synthetic image purporting to show Epstein walking in Tel Aviv in recent days, flanked by bodyguards and surrounded by Israeli street signage. This image circulated widely on social media.

It was false.

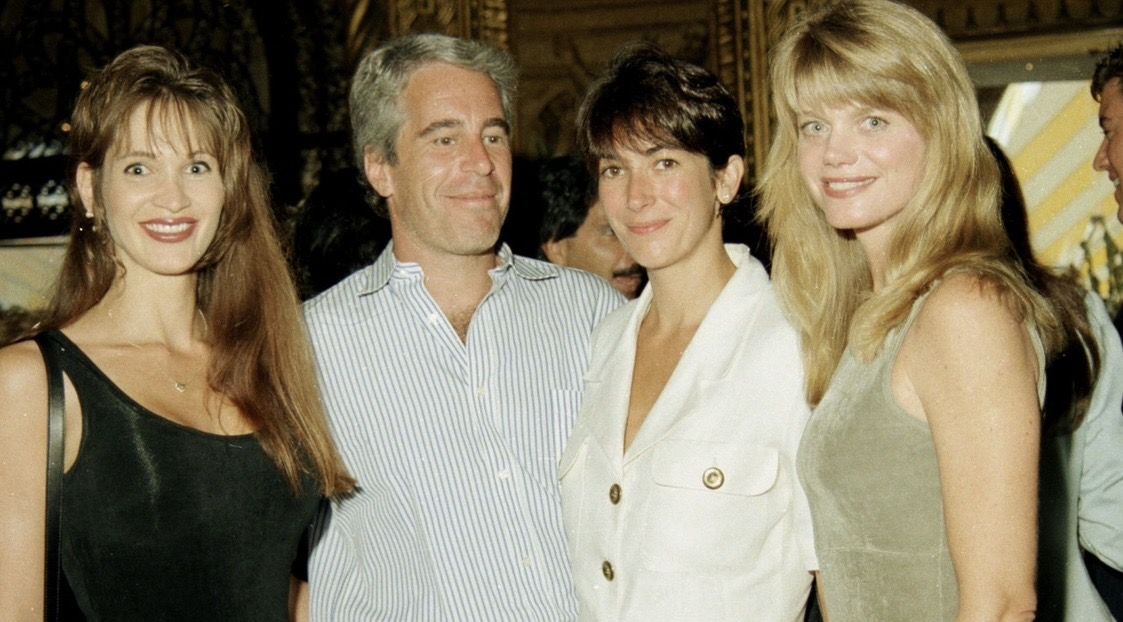

The second image was a composite that digitally inserted Israeli President Isaac Herzog into a well-known historical photograph of Epstein and Ghislaine Maxwell from the 1990s.

That composite image was reposted by Gabrielle Sivia Weiniger, a journalist covering the Middle East for The Times of London, before she later clarified publicly that it was an AI-generated fake and apologized for reposting it without verifying the source.

The two images are separate fabrications.

The journalistic failure concerns the second.

The apology came. The damage had already been done.

This incident is not about one tweet. It is about a structural vulnerability in modern journalism: the collapse of visual verification in the age of synthetic imagery.

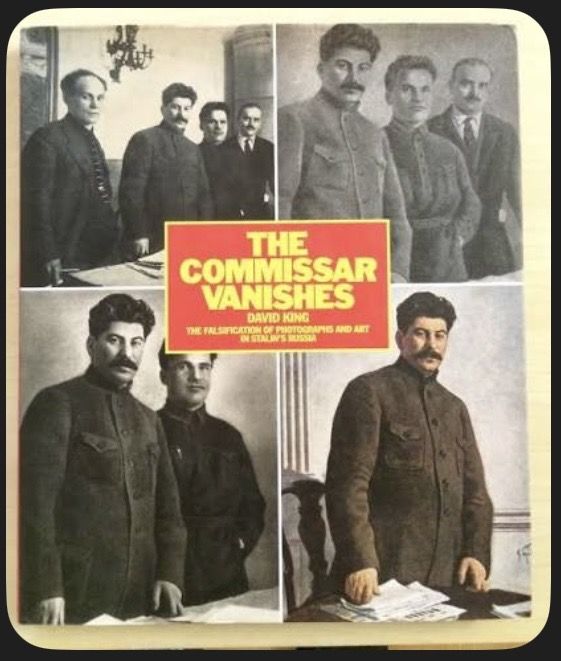

Visual Manipulation Is Not New

Photographic manipulation predates artificial intelligence.

In the Soviet Union, political enemies were erased from photographs. Darkrooms were instruments of power. Retouching altered historical memory. As documented in studies such as Inside Stalin’s Darkroom, photography has long been vulnerable to manipulation in the service of narrative control.

What has changed is not the impulse to manipulate.

What has changed is access.

What once required state infrastructure, chemical expertise, and physical negatives can now be achieved by anyone with generative software. Synthetic realism is scalable. It is instantaneous. It is globally distributable.

The barrier to entry has collapsed.

Editorial discipline has not caught up.

Case Study One: The Tel Aviv Crossing Image (Social Media Fabrication)

The “Epstein crossing the road” image is instructive as a forensic teaching example.

It depicted Epstein walking across what appeared to be a Tel Aviv street, accompanied by a symmetrical security detail. Street signage appeared in Hebrew, Arabic, and English, suggesting documentary authenticity.

The flaws were visible.

1. Textual Anomalies

The Hebrew lettering was malformed and inconsistent with Israeli municipal typographic standards. The Arabic text exhibited structural irregularities. The English transliteration appeared mechanically generated.

Israeli street signage follows a strict formatting hierarchy. Script placement, spacing, and typeface conventions are standardized.

AI systems often generate text-like forms that resemble language without adhering to structural rules.

The signage was a giveaway.

2. Over-Symmetrized Staging

The bodyguards were evenly spaced, compositionally balanced, cinematically arranged. Real protective formations in pedestrian zones are rarely so geometrically tidy.

Generative systems favor visual symmetry over operational realism.

3. Optical Inconsistencies

Uniform smoothing across motion zones, inconsistent edge definition, and depth transitions lacking optical coherence were detectable without specialized software.

None of these indicators required advanced forensic tools.

They required attention.

This image was never part of a mainstream publication. It demonstrates how easily synthetic realism can circulate online.

Case Study Two: The Epstein–Herzog Composite (Mainstream Amplification)

The more consequential failure involved a composite image digitally inserting Isaac Herzog into a historical photograph of Epstein and Ghislaine Maxwell taken decades ago.

This is the image reposted by Gabrielle Sivia Weiniger.

The forensic indicators were equally visible.

1. Temporal Impossibility

The base image of Epstein and Maxwell dates to the 1990s. Herzog appears in the composite as he looks today, not as he would have looked when the original photograph was taken.

No contextual explanation bridged that gap.

Chronology alone should have triggered verification.

2. Compositional Discontinuity

Herzog’s head and torso exhibit lighting characteristics inconsistent with the ambient tone of the original image. The facial sharpness and color temperature do not fully match those of the surrounding subjects.

Compositing artifacts are subtle but present.

3. Anatomical Distortion

Epstein’s extended arm, positioned as if he were taking a selfie, displays unnatural elongation relative to shoulder alignment and torso perspective. The spatial geometry suggests digital manipulation rather than authentic lens distortion.

4. Depth and Edge Integration

The insertion lacks full depth-of-field coherence. Edge blending around Herzog’s outline shows minor integration inconsistencies when compared to adjacent subjects.

None of this required advanced AI detection software.

It required a plausibility assessment.

Take an image of Isaac Herzog from circa 2021 and manipulate a photo taken at Mar-a-Largo in 1995 and voila – you get an image that only Gabrielle Weiniger (and a bunch of antisemitic conspiracy theorists) would believe. pic.twitter.com/KE4j2Q96QI

— HonestReporting (@HonestReporting) February 9, 2026

The Journalistic Failure

Gabrielle Sivia Weiniger later acknowledged the image was an AI fake and apologized for reposting it without checking the source.

That admission matters.

But so does sequence.

In journalism, the initial insinuation travels further than the correction. Audiences remember the visual association. Retractions rarely reach the same velocity.

This is not about intent.

It is about standards.

Before amplifying an image suggesting geopolitical implications, baseline checks must occur:

- Source verification

- Reverse image search

- Metadata review

- Chronological plausibility assessment

- Lighting and compositional scrutiny

If these were not conducted, why not?

If they were conducted, how did this pass?

The issue is not that AI exists.

The issue is that verification did not precede amplification.
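
For newsrooms that want to make this sequence explicit, the baseline checks listed above can be treated as a gate that must be cleared before an image is reposted. The following is a minimal sketch in Python, not a description of any newsroom's actual tooling. The check names mirror the list above. The `ImageReview` class and its method names are hypothetical, invented for illustration.

```python
from dataclasses import dataclass, field

# Check names mirror the baseline list in the article.
# Everything else here (class, methods) is a hypothetical illustration.
BASELINE_CHECKS = (
    "source_verification",
    "reverse_image_search",
    "metadata_review",
    "chronological_plausibility",
    "lighting_and_composition",
)


@dataclass
class ImageReview:
    """Records which baseline checks an editor has completed for one image."""

    image_id: str
    passed: set = field(default_factory=set)
    failed: set = field(default_factory=set)

    def record(self, check: str, ok: bool) -> None:
        """Store the outcome of one check; a later result replaces an earlier one."""
        if check not in BASELINE_CHECKS:
            raise ValueError(f"unknown check: {check}")
        self.passed.discard(check)
        self.failed.discard(check)
        (self.passed if ok else self.failed).add(check)

    def pending(self) -> list:
        """Return the checks that have not been run yet, in list order."""
        done = self.passed | self.failed
        return [c for c in BASELINE_CHECKS if c not in done]

    def may_amplify(self) -> bool:
        """Allow amplification only if every check ran and none failed."""
        return not self.failed and not self.pending()
```

In this sketch, an image that has had no checks recorded is blocked by default, and a single failed check (for example, chronological plausibility) blocks it even if every other check passed. The design choice is that verification precedes amplification: the default answer is "no" until the record says otherwise.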

Why This Matters in a Conflict Environment

In previous articles in this series, we examined how imagery shapes public understanding before context catches up.

AI introduces a second distortion layer.

If real imagery already frames moral judgment, synthetic imagery can now be injected seamlessly into public discourse.

When journalists amplify composite visuals without forensic scrutiny, they weaken trust not only in their own reporting but in visual documentation itself.

The danger is cumulative.

A Practical Framework for Detection

Synthetic imagery often reveals itself through:

- Text anomalies, especially in multilingual signage

- Over-symmetrical human arrangement

- Inconsistent lighting gradients

- Edge blending irregularities

- Chronological implausibility

- Absence of a verifiable source chain

- Missing or stripped metadata

These are first-level editorial checks.

If newsrooms cannot institutionalize them, synthetic misinformation will repeatedly outrun verification.
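
As one illustration of a first-level check, the metadata item in the list above can be partly automated. The sketch below uses only the Python standard library to test whether a JPEG file carries an Exif segment at all. The function name `has_exif_segment` is hypothetical. Treat the result as a prompt for further questions, not a verdict: many social platforms strip metadata on upload, so its absence does not prove fabrication, and metadata can be edited, so its presence does not prove authenticity.

```python
import struct


def has_exif_segment(data: bytes) -> bool:
    """Return True if JPEG bytes contain an Exif APP1 segment in the header area."""
    if data[:2] != b"\xff\xd8":  # every JPEG starts with the SOI marker
        raise ValueError("not a JPEG stream")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            break  # malformed header; stop scanning
        marker = data[pos + 1]
        if marker == 0xFF:  # fill byte before a marker; skip it
            pos += 1
            continue
        if marker in (0xDA, 0xD9):  # start of scan or end of image
            break
        # Segment length is big-endian and includes its own two bytes.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        payload = data[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length
    return False
```

A typical use would be to read a downloaded file with `open(path, "rb").read()` and pass the bytes in. A `False` result means the file arrived without Exif data, which is a reason to trace the source chain by other means rather than a conclusion in itself.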

Philosophical Escalation: From Evidence to Claim

Historically, photographs functioned as presumptive evidence.

Today, images increasingly function as claims.

Verification must move from pixel-level trust to process-level scrutiny.

The Soviet darkroom removed individuals from history.

Generative AI inserts them.

Both manipulate memory. Both reshape narrative. Both exploit visual authority.

Conclusion

The synthetic Epstein–Herzog composite is not an isolated embarrassment.

It is a warning.

When journalists amplify fabricated visuals without basic forensic review, the damage extends beyond a single correction. It erodes trust in visual documentation itself.

In an era where conflicts are increasingly mediated through images, journalism must become more rigorous, not less.

If images are no longer inherently evidentiary, verification becomes the profession’s last line of defense.

Otherwise, the next synthetic image will not merely mislead.

It will define reality before anyone thinks to question it.
