Key Takeaways:

- Generative AI has erased the line between documentation and fabrication, enabling the mass production of hyper-realistic but entirely fake Gaza “atrocity” footage.

- Viral AI “slop” exploits emotion and confirmation bias, spreading faster than fact-checks and reinforcing preexisting narratives about Israel.

- Synthetic flood scenes, injured children, and cinematic bombing clips reveal how AI-driven propaganda is eroding public trust in all visual evidence.

“The camera never lies,” goes the old adage.

For decades, that assumption underpinned modern journalism. Video could be edited, framed selectively, even staged – but it still captured something that physically occurred. Even the most manipulative footage contained a baseline reality: the people filmed existed, the scene happened, the light struck a lens.

That premise no longer holds.

The explosion of generative artificial intelligence has collapsed the boundary between documentation and fabrication. Today, anyone with access to widely available platforms can generate highly realistic video footage from nothing more than a written prompt. No camera. No scene. No event.

What emerges is not cartoon animation or obvious CGI. It is cinematic, textured, emotionally manipulative pseudo-reality – footage that looks disturbingly authentic to the untrained eye.

And in the information war surrounding Gaza, this technology has become a weapon.

From Documentation to Fabrication

AI-generated videos are often imperfect. Fingers bend unnaturally. Limbs duplicate. Shadows misalign. Text blurs into nonsense. Physics occasionally falters.

But these flaws are subtle, especially on fast-scrolling social media feeds where outrage spreads faster than scrutiny.

Internet users have coined a term for this flood of low-quality synthetic content: “AI slop.” It describes mass-produced, emotionally charged, machine-generated material designed for virality rather than truth.

The label is apt.

Because what we are seeing is not merely experimentation with technology. It is the industrial-scale production of fabricated atrocity imagery.

Gaza as a Testing Ground

No conflict has been more saturated with emotionally charged imagery than Gaza. The visual economy of this war – rubble, children, destruction – already lends itself to narrative weaponization.

Generative AI has supercharged it.

Fabricated videos purport to show Israeli soldiers committing grotesque acts. Imaginary victims appear in staged hospital corridors. Synthetic rescue scenes circulate with dramatic music and captions framing them as real-time evidence of war crimes.

They are not real.

Yet they are shared as proof.

In many cases, the tell-tale AI distortions remain visible. Extra fingers. Warped facial expressions. Inconsistent lighting. But these inconsistencies are ignored or rationalized because the content aligns with prior belief.

The psychology is not complicated.

When viewers already believe Israel is uniquely malevolent, AI-generated fiction does not trigger skepticism. It confirms expectation.

And confirmation spreads faster than correction.

The Incentive Structure

AI slop thrives for three reasons:

- Speed: Synthetic footage can be produced instantly, without access to Gaza or risk to a cameraman.

- Scale: Dozens of fabricated scenes can be generated in hours.

- Emotional Optimization: Prompts can be engineered for maximum outrage.

And unlike earlier eras of staged propaganda – sometimes referred to as “Pallywood” – AI requires no actors, no coordination, no physical staging. The fabrication happens entirely in code.

The result is a flood of imagery untethered from reality but emotionally indistinguishable from it.

The AI Lies That Went Viral

The “Great Gaza Floods”

In December, as heavy rain fell across Israel and Gaza, social media platforms were suddenly saturated with videos purporting to show displaced Palestinians drowning inside tent encampments in southern Gaza.

The imagery was calibrated for maximum emotional impact.

Children standing waist-deep in water.

Children shivering inside submerged tents.

Children pleading directly into the camera: “We are scared. Where do we go? We have nothing. Someone help us.”

The videos were fake.

HonestReporting has deliberately chosen not to embed or repost them here. Reproduction feeds the algorithmic machine that made them viral in the first place. But archived copies and screenshots reveal clear indicators of computer generation.

In one widely circulated clip, four children stand in what is supposed to be freezing floodwater, while a girl addresses the camera in a strangely mechanical cadence. At first glance, the scene appears plausible, even harrowing. But a closer look reveals multiple inconsistencies. The children’s facial movements do not quite align with the words being spoken, and their bodies remain oddly rigid despite allegedly standing in cold, waist-deep water. Large sections of their clothing appear inexplicably dry, as though untouched by the surrounding flood. In the background, a Palestinian flag flutters in the wind without any visible pole or structure anchoring it, while electrical wires drift through the frame, suspended mid-air without discernible connection points.

Other clips showed children inside tents filled almost to their necks with rainwater, which is entirely implausible.

Humanitarian tents are made of thin fabric and lightweight frames. They do not form watertight basins capable of holding thousands of liters of water. Rainwater would collapse the structure or drain into the ground long before a tent could become a freestanding pool.

Some videos even showed rain falling inside the tent while the canvas above appeared intact.

Yet the clips amassed millions of views.

The Baby “Trapped Under Rubble”

Another image spread rapidly across platforms: a grotesquely injured infant allegedly trapped beneath debris.

The image was designed to shock.

It had all the hallmarks of AI fabrication – hyper-detailed rubble textures, exaggerated facial distortion, theatrical lighting.

A closer look revealed the most obvious tell: extra fingers protruding from the lower corner of the frame.

And yet the image appeared on the front page of the French newspaper Libération. It was also photographed being held aloft by protesters in France and circulated by mainstream outlets, including Le Figaro, in coverage of demonstrations.

By the time corrections emerged, the damage had already been done.

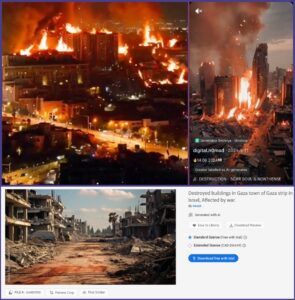

Israeli “Indiscriminate Bombing”

Perhaps the most visually dramatic wave of AI slop involved towering infernos – entire neighborhoods engulfed in cinematic walls of flame.

These clips were presented as proof of Israel’s “indiscriminate bombing” in Gaza and, later, in Lebanon.

They were shared as visual confirmation of disproportionate destruction. Yet forensic inconsistencies quickly surfaced.

The fire behavior defied basic combustion patterns, with flames rising in eerily symmetrical columns across entire building facades. Explosions appeared without visible blast waves, and structures disintegrated in ways inconsistent with real munitions impact. The lighting resembled a high-budget cinematic rendering rather than the uneven glare of battlefield footage.

Real conflict imagery is chaotic, imperfect, and visually messy. These scenes were too composed – too symmetrical, too dramatic – looking less like war reporting and more like a disaster film trailer. And yet many users shared them without hesitation, precisely because they confirmed what they already believed.

How You Can Spot AI-Generated Fakes

Generative video is improving rapidly. Detection will become harder.

But for now, most AI slop still betrays itself.

When assessing potentially AI-generated footage, the first place to look is often the human body itself. Generative systems still struggle with hands and fingers, producing extra digits, fused knuckles, or subtly malformed nails that do not quite align with anatomy. These distortions are not always obvious at a glance, but they frequently reveal themselves on closer inspection.

Physics offers another reliable clue. Objects may float without support, water may behave more like gelatin than liquid, or fire may burn in unnaturally uniform walls across entire building facades. Rain might fall indoors without any visible structural breach.

Clothing and fabric can also expose synthetic imagery. In supposedly wet environments, garments may remain inexplicably dry. Wind may ripple a flag while leaving surrounding objects unaffected. Fabric can appear weightless or oddly rigid, lacking the subtle irregularity of real movement.

Lighting, too, often gives the game away. Shadows may contradict visible light sources, while scenes are bathed in dramatic, cinematic contrast more reminiscent of digital rendering software than of chaotic battlefield conditions. Real conflict footage is visually uneven; AI-generated scenes are often overly polished.

Finally, consider the emotional architecture of the scene. Many AI fakes are engineered for maximum pathos: crying children delivering implausibly scripted lines directly to camera, devastation framed with almost theatrical precision, expressions frozen in perfectly captured anguish.

But visual inspection alone is sometimes not enough.

Online tools such as Hive Moderation allow users to upload suspicious images or videos and receive an assessment of whether the content is likely synthetic or AI-enhanced. No system is perfect, but running viral footage through independent detection tools adds an important layer of scrutiny.

Beyond that, ask basic logistical questions. How was it filmed? Where did it originate? Does it have a traceable provenance, or does it simply appear online already packaged for outrage? Authentic footage typically leaves an evidentiary trail – metadata, consistent geography, corroborating angles. When that chain is absent, skepticism is not cynicism. It is responsibility.

The New Information Battlefield

AI slop does not replace real suffering. It exploits it, piggybacking on genuine tragedy and flooding the digital space with synthetic horror that is faster to produce, easier to share, and harder to retract.

In a conflict already shaped by staged imagery and narrative activism, generative AI represents a qualitative escalation. The danger is not simply deception, but erosion. When audiences can no longer distinguish between documentation and fabrication, every image becomes suspect, including authentic evidence of wrongdoing. That uncertainty serves propagandists.

The camera once required a scene. Now it requires only a prompt. And in Gaza’s information war, that shift matters.
